Ascend.io and Looker Unify ETL Across Data Lakes, Warehouses, and Pipelines

Through Ascend’s new, native integration for Looker, data teams can now reach beyond the data warehouse to directly connect, explore, and unify live data from data lakes, warehouses, and pipelines

Ascend.io Expands Its Unified Data Engineering Platform and Accelerates the Economics of Data with the Release of Ascend Govern

An Industry First, Data Teams Can Now Calculate Data’s ROI to Demonstrate Business Impact with Integrated Cataloging, Costing, Lineage, Security and Reporting

TDWI Best Practices Report | Faster Insights from Faster Data

This TDWI Best Practices Report examines experiences, practices, and technology trends that focus on identifying bottlenecks and latencies in the data’s life cycle, from sourcing and collection to delivery to users, applications, and AI programs for analysis, visualization, and sharing.

Bloor Group Webinar | Beyond Pipelines – The Power of Data Orchestration

The combination of artificial intelligence and data pipelines opens remarkable new opportunities for data orchestration. The end result is a dynamic, automated paradigm for data movement, one that optimizes […]

Ascend Assembles Executive Advisory Council to Explore the Data-Driven Transformation of Business, Technology, and Society

Visionary Leaders from Microsoft, Google and More to Join Summit

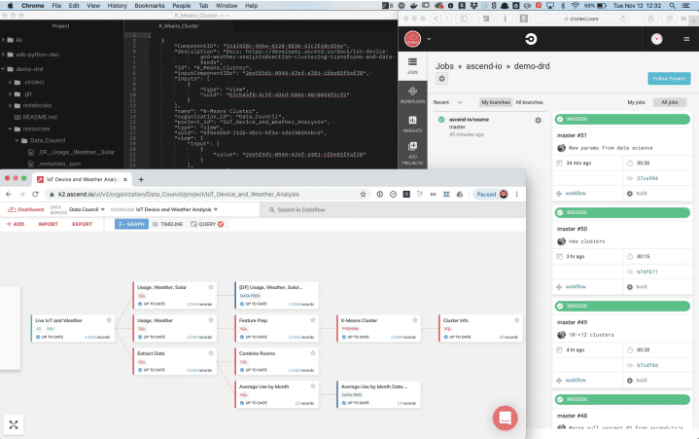

Ascend Declarative Pipeline Workflows Address the Challenges Facing DataOps Today

At Ascend, we are excited to introduce a new paradigm for managing the development lifecycle of data products — Declarative Pipeline Workflows. Keying off the movement toward declarative descriptions in the DevOps community, and leveraging Ascend’s Dataflow Control Plane, Declarative Pipeline Workflows are a powerful tool that allows data engineers to develop, test, and productionize data pipelines with an agility and stability that has so far been lacking in the DataOps world.

Four Anti-patterns in Balancing Data Teams

Let’s look at how managers of data teams can set the stage for a path that fuels speed and business results, by sorting out different aspects of what needs to get accomplished, and tagging four common mistakes as killer anti-patterns.

Whitepaper | An Assessment of Pipeline Orchestration Approaches

In this whitepaper, we compare and contrast both approaches to pipeline orchestration and the impact to data engineering.

The Anti-Pattern in Big Data

Today’s scale of data creation and ingestion has reached magnitudes that have fueled an Icarus-like obsession with data-driven business decisions. The desire for velocity in analytical processing, machine learning, and visualization has only enlarged the gap between the vision of a data-powered intelligence engine and the actual tools used for this concept.

Ascend Brings Declarative Pipelines to DataOps Workflows for Safe and Predictable Data Engineering at Scale

Declarative definitions, automated workflow integrations, and zero downtime pipeline deployments provide speed, flexibility, and stability across data lifecycle

Where is the Value: Code or Data?

While your data team likely includes people with all three of these points of view, what really matters is the position of the leaders, and the pace with which the team is adapting to the real needs of the business. So while we’re rolling the dice with the alphas, let’s take a moment to look at the two sources of value in this context: data and code.

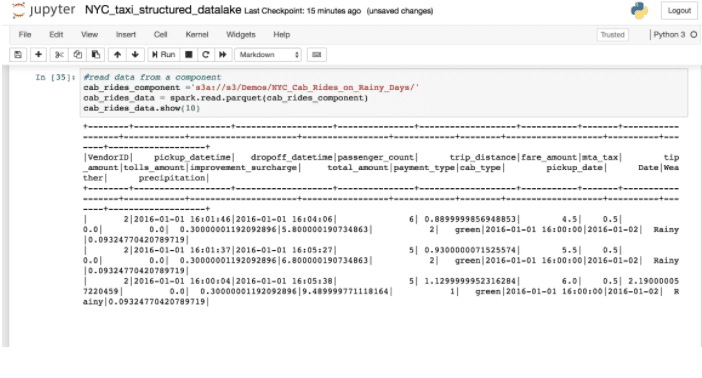

Building the Ascend Structured Data Lake

We run everything on Kubernetes and manage system state in MySQL plus a large scale blob store (Google Storage/S3/Azure Blob Storage).