Data ingestion, or more narrowly, extract and load, is the beginning of a complex process of converting raw data into a valuable business asset. While it is a necessary step in building any data product, it is also a step that can incur high costs and delays early in the data management process. On top of that, business value is created only after the data has been ingested. Data in its most basic form is not valuable until we do the hard work of transforming it into a state from which the business can derive insights. In this article, we make the case for free data ingestion, which removes a barrier to the transformation steps where value is actually created.

The Relative Cost of Data Ingestion

The cloud providers are pushing infrastructure costs down while simplifying access to a wide variety of data in disparate locations. This should mean that ingestion costs are also dropping. However, for most data teams, those costs are rising.

Ingestion Remains a Hard Problem to Solve

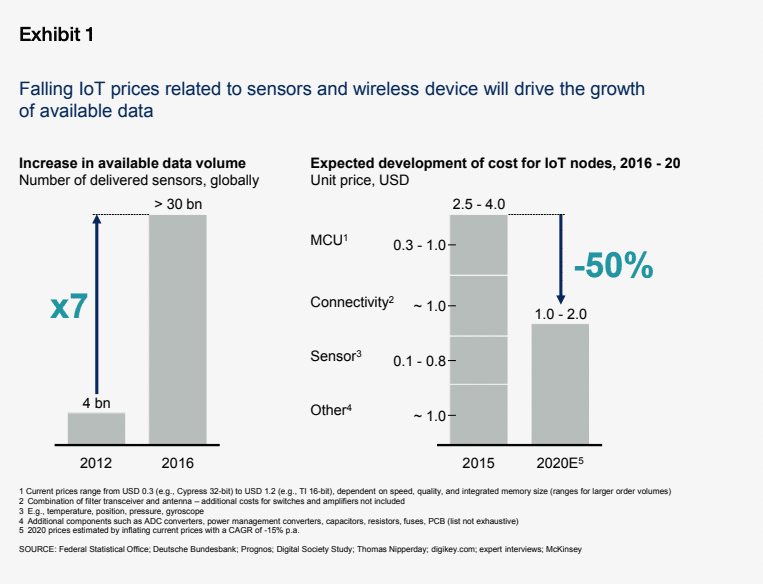

The explosion of data sources (IoT, APIs, cloud applications, on-premise data, various databases, etc.), the increase in computing power and improvements in storage, and the advancements in modern AI/ML tools are driving the demand for more and more data.

Despite efforts by the cloud providers and major data platform players to reduce ingestion friction, it remains a hard problem to solve. A survey by Forbes found that 95 percent of businesses face some kind of need to manage unstructured data.

The vast increase in the amount, diversity, and complexity of data—including technical differences between source systems and Change Data Capture (CDC) strategies—makes the process of accessing it overwhelming. This has greatly expanded the opportunities for ingest-centric vendors to monetize this pain point with single-purpose tools.

Data Management Supply Chain Gaps

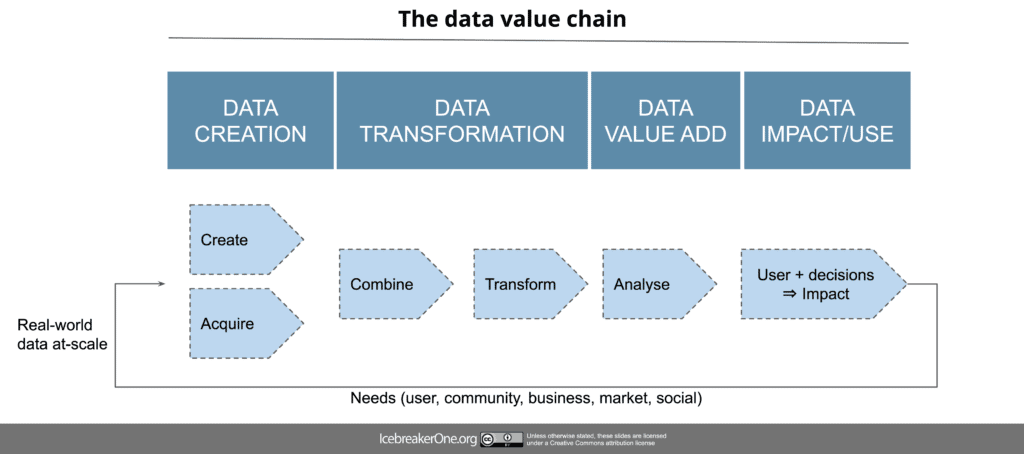

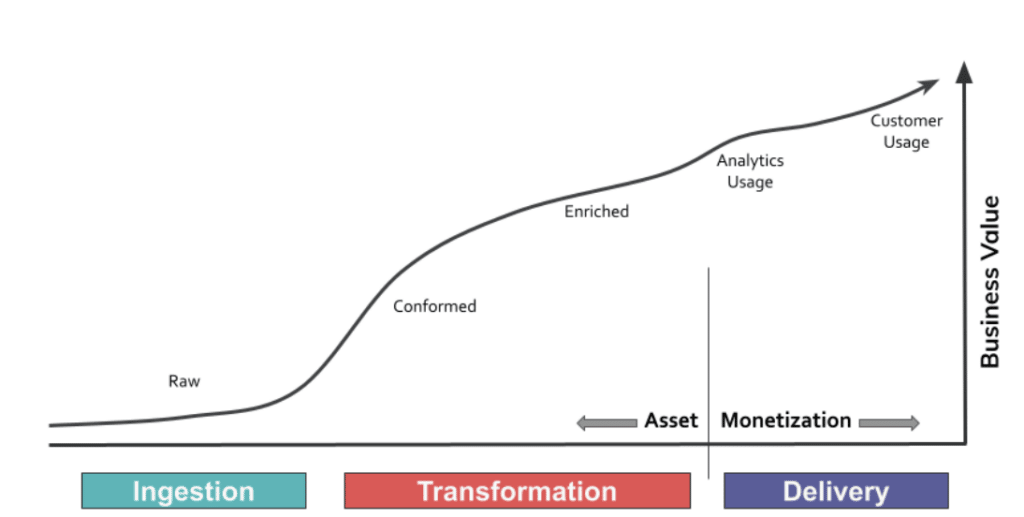

Like any other product, data has a value cycle beginning with raw materials (ingestion), increasing value through a manufacturing process (transformation), and peaking with delight in customers’ hands (delivery).

Architectures that source a separate tool for each of these stages also incur costs from a separate vendor at each stage, each of which seeks to maximize profit in its own niche. This is a recipe for a costly and complex supply chain that can be difficult or impossible to optimize.

Why Should Data Ingestion Be Free?

Whether the data is created by the organization, purchased, or licensed for free use, “raw” data always requires work to become useful. The simplest jobs involve removing some columns, converting text fields into date objects, or applying other basic transformations.
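To make the "simplest jobs" concrete, here is a minimal sketch in plain Python of dropping a column and converting text fields into typed values. The rows, the column names (`order_id`, `ordered_on`, `internal_note`, `amount`), and the date format are all hypothetical and chosen only for illustration. A real pipeline would likely use SQL or a dataframe library instead.

```python
from datetime import date, datetime

# Hypothetical raw rows as they might arrive from a source system:
# every value is still text, and one column is not needed downstream.
raw_rows = [
    {"order_id": "1001", "ordered_on": "2023-01-15", "internal_note": "n/a", "amount": "19.99"},
    {"order_id": "1002", "ordered_on": "2023-02-03", "internal_note": "rush", "amount": "5.00"},
]

# Assumed list of columns the business does not need.
DROP_COLUMNS = {"internal_note"}


def clean_row(row: dict) -> dict:
    """Drop unwanted columns and convert text fields to typed values."""
    kept = {key: value for key, value in row.items() if key not in DROP_COLUMNS}
    kept["order_id"] = int(kept["order_id"])
    # Assumes ISO-style dates (YYYY-MM-DD) in the source.
    kept["ordered_on"] = datetime.strptime(kept["ordered_on"], "%Y-%m-%d").date()
    kept["amount"] = float(kept["amount"])
    return kept


clean_rows = [clean_row(row) for row in raw_rows]
```

Even a step this small only pays off once the data is already in the system, which is why the cost of getting it there matters.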

More complex work includes merging new data sets with other established data to create new views for the business to consume. Regardless of the level of transformation, the business value of the data when it first enters a system is zero if it is not immediately ready to be consumed.
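The merging work described above might look like the following sketch, again in plain Python. It joins newly ingested records to established reference data and then builds a simple view for the business. The `customers` and `new_orders` data, and the helper names `enrich_orders` and `revenue_by_region`, are hypothetical; they are assumptions for illustration, not part of any particular tool.

```python
# Hypothetical established reference data, keyed by customer_id.
customers = {
    1: {"name": "Acme Corp", "region": "EMEA"},
    2: {"name": "Globex", "region": "APAC"},
}

# Hypothetical newly ingested records.
new_orders = [
    {"order_id": 1001, "customer_id": 1, "amount": 19.99},
    {"order_id": 1002, "customer_id": 2, "amount": 5.00},
    {"order_id": 1003, "customer_id": 9, "amount": 7.50},  # no matching customer
]


def enrich_orders(orders, customer_lookup):
    """Left-join each order to its customer; unmatched orders get None fields."""
    enriched = []
    for order in orders:
        customer = customer_lookup.get(order["customer_id"], {})
        enriched.append({
            **order,
            "customer_name": customer.get("name"),
            "region": customer.get("region"),
        })
    return enriched


def revenue_by_region(enriched):
    """Sum order amounts per region; orders without a region go to UNKNOWN."""
    totals = {}
    for row in enriched:
        region = row["region"] or "UNKNOWN"
        totals[region] = totals.get(region, 0.0) + row["amount"]
    return totals


order_view = enrich_orders(new_orders, customers)
region_totals = revenue_by_region(order_view)
```

The ingested orders carry no business meaning on their own; the regional totals exist only after the join and the aggregation.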

The parallels with physical product supply chains are enlightening. For many companies producing physical products, supply chain consolidation is a key competitive advantage. These companies reduce the number of profit centers, leverage scale for efficiency, and invest in automation.

At full maturity, raw materials or individual product components become commoditized as the vendors converge on standardized designs, specifications, and technologies while pricing becomes increasingly transparent. As a result, costs to the customer are reduced and vendor profits are spread across the supply chain.

There is solid evidence that the data management supply chain is ripe for similar platform/vendor consolidation:

- Infrastructure automation has already standardized on Kubernetes (K8s)

- Massively parallel job automation via Spark is readily available in all clouds

- A small and shrinking set of transformation languages (SQL, Python) has become ubiquitous, with large pools of skilled workers

- Cloud data storage and compute capacity are nearly limitless

When the data management supply chain is consolidated and data ingestion costs are removed, it generates a ripple effect that can unlock business value. Data ingestion is a bottleneck in creating and iterating on data products. Real business value is generated post-ingestion using transformations, reusing key dataflows, and sharing and updating master data—all while optimizing the compute and infrastructure configuration.

Final Thoughts About Free Data Ingestion

Today, companies are investing in several tools to manage the various functions in the data management supply chain, including data ingestion. While these technologies enable organizations to take action on the data and create impact, they don’t work cohesively—adding friction, isolation, and costs to the data management process.

By ingesting data with the same tool that transforms it, data teams can build an end-to-end, integrated data management process, remove the ingestion cost barrier, and accelerate business value creation.