DATA PIPELINE AUTOMATION

Build Data Pipelines 10x faster at 67% less cost.

Powering the world's best data teams, from next-generation startups to established enterprises.

Finally, one tool to rule them all.

Data Ingestion

Connect and ingest data to your lake or warehouse:

- 250+ pre-built connectors

- Custom Python framework

- Support for all major CDC strategies
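For readers new to change data capture (CDC), here is a minimal sketch of one common strategy, timestamp-based incremental loading. It is illustrative only and is not Ascend's API: the function name `incremental_changes`, the `updated_at` column, and the sample rows are all assumptions made for the example.

```python
def incremental_changes(rows, watermark):
    """Return rows modified after `watermark`, plus the advanced watermark.

    Hypothetical helper: assumes each row carries an ISO 8601 UTC
    `updated_at` string, so plain string comparison orders rows by time.
    """
    changed = [row for row in rows if row["updated_at"] > watermark]
    # Keep the old watermark when nothing changed.
    new_watermark = max((row["updated_at"] for row in changed), default=watermark)
    return changed, new_watermark


# Made-up source table for illustration.
source = [
    {"id": 1, "updated_at": "2024-01-01T00:00:00Z"},
    {"id": 2, "updated_at": "2024-01-02T00:00:00Z"},
    {"id": 3, "updated_at": "2024-01-03T00:00:00Z"},
]

# Only rows newer than the last sync point are ingested.
changed, watermark = incremental_changes(source, "2024-01-01T12:00:00Z")
print([row["id"] for row in changed], watermark)
```

Timestamp watermarks are only one CDC approach. Log-based and snapshot-diff strategies trade off differently, for example in how they detect deleted rows.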

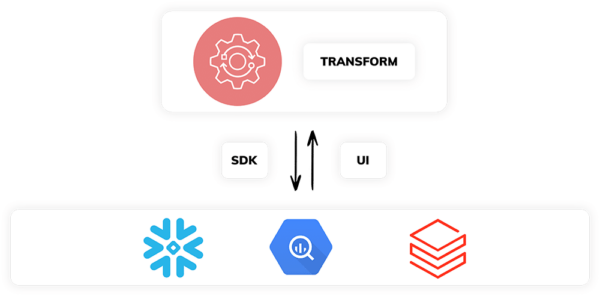

Data Transformation

Push-down SQL and Python to your favorite lake or warehouse:

- Simple, declarative paradigm

- Use the rich UI or program through your IDE

- Integrate fully into your current CI/CD practices

Reverse-ETL

Share data internally or externally with a few clicks:

- Pre-built connectors for external data activation

- Easy Publish/Subscribe across pipelines for data re-use

- Live Data Share to connect across different lakes, warehouses, and clouds

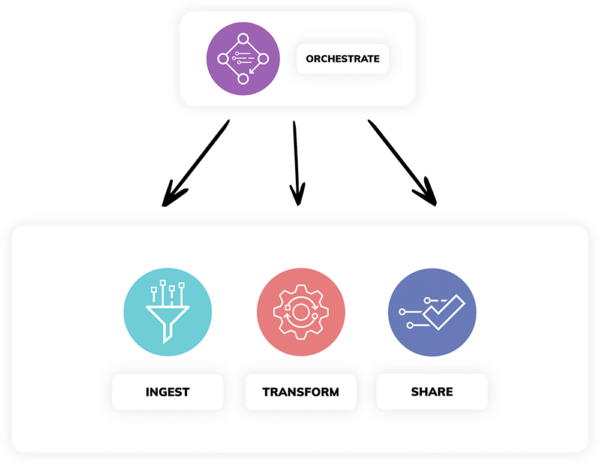

Data Orchestration

Dynamically generated orchestration as you build:

- Pipelines run when new data arrives

- Automatic change propagation throughout multiple linked pipelines

- End-to-end lineage across lakes and warehouses
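The idea of running pipelines only when new data arrives, and propagating changes to linked pipelines, can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Ascend's implementation: the `Source` and `Step` classes, the `fingerprint` helper, and the sample data are hypothetical names invented for the example.

```python
import hashlib
import json


def fingerprint(data):
    """Stable hash of JSON-serialisable data, used to detect changes."""
    encoded = json.dumps(data, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class Source:
    """Hypothetical data source whose rows may change between refreshes."""

    def __init__(self, name, rows):
        self.name = name
        self.rows = rows

    def refresh(self):
        return self.rows


class Step:
    """Hypothetical pipeline step that reruns only when its inputs change."""

    def __init__(self, name, transform, upstream):
        self.name = name
        self.transform = transform  # function: list of upstream outputs -> output
        self.upstream = list(upstream)
        self.output = None
        self.seen = None  # fingerprint of the inputs used in the last run
        self.runs = 0

    def refresh(self):
        # Refresh upstream first so changes propagate down the chain.
        inputs = [node.refresh() for node in self.upstream]
        current = fingerprint(inputs)
        if current != self.seen:
            self.output = self.transform(inputs)
            self.seen = current
            self.runs += 1
        return self.output


orders = Source("orders", [{"id": 1, "amount": 10}])
totals = Step("totals", lambda ins: sum(r["amount"] for r in ins[0]), [orders])
report = Step("report", lambda ins: {"total": ins[0]}, [totals])

report.refresh()  # first run: every step executes
report.refresh()  # no new data: nothing reruns
orders.rows = orders.rows + [{"id": 2, "amount": 5}]
report.refresh()  # new data: the change propagates through both steps
print(totals.runs, report.runs, report.output)
```

Because each step compares a fingerprint of its inputs rather than following a fixed schedule, a step whose inputs are unchanged is skipped even when something further upstream was recomputed.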

Consolidate Tools. Spend Less.

THE ASCEND DIFFERENCE

Get data pipeline superpowers from the world’s first DataAware™ control plane.

TIME BACK IN YOUR DAY

Ditch the maintenance, impact analysis, and change management of traditional pipelines.

LOTS OF MONEY SAVED

Automate data pipeline best practices and watch the savings rack up.

CONFIDENCE IN YOUR PIPELINES

Know what’s happening in your pipelines at every moment, with live UI updates and drill-downs.

Don't just take our word for it...

Read the research.

Leader and Outperformer in the GigaOm Radar Report

Raising the Bar on Data Pipelines

Your roadmap to master the complex world of data pipelines:

- Discover top vendors and competitive differences.

- Understand key evaluation criteria for data pipeline software.

- Learn why Ascend stands as a beacon of innovation and performance.

GENERATING UP TO 2,781% ROI IN ESG THIRD-PARTY ANALYSIS

Get a 700% Increase in Data Engineering Productivity

Learn why Ascend customers are experiencing:

- 80% less effort to build data pipelines

- 25% of their time freed from maintaining the data stack every week

- At least $156k in savings on data pipeline tools every year

Explore ways to connect with us, in a city or on a screen near you.

Our Thoughts on the Data Space

Introducing Project Inception: The Next Evolution in Data Automation

At Ascend, we believe it’s time to rethink data engineering from the ground up. As the world of data continues to evolve at a breakneck pace, we are thrilled to

The Symbiotic Relationship Between AI and Data Engineering

Explore the relationship between AI and data engineering: How do they impact each other, and what does the future of their collaboration look like?

AI Data Platform: Key Requirements for Fueling AI Initiatives

Unlock AI’s potential with the right data platform: Explore AI data platform requirements, cloud capabilities, and automation for innovation.

Get Started for Free

Play around with it first. Pay and add your team later.

Docs

Dive deeper into Ascend with our comprehensive developer docs. Explore now to level up your skills.

Release Notes

Check our release notes to explore new features and enhancements. Learn what's new today!

The DataAware Podcast

Join us as we explore trends, best practices, and real-world use cases. Stay informed, stay DataAware!